Generalization Error via the Replica Method: Learning in the Hidden Manifold Model

This post is an annotated writeup of a final project for Information and Physical Computing (CSDS/PHYS). The central question: in the high-dimensional limit (features $p$, samples $n$, and latent dimension $d$ all large with fixed ratios), what is the exact generalization error of a linear student model trained on data generated by a nonlinear teacher?

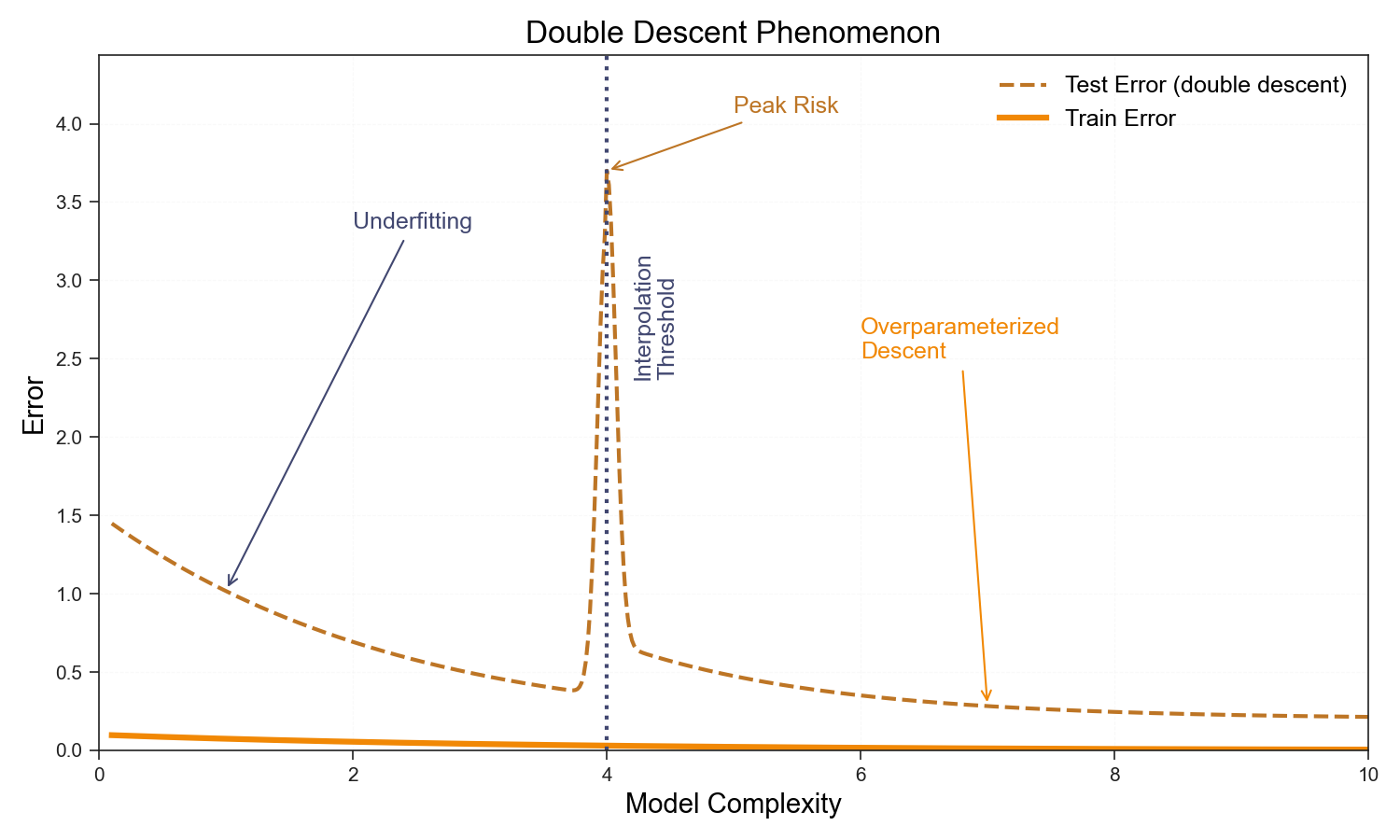

The answer, derived via the replica method, reveals the celebrated double-descent phenomenon as a consequence of the structure of the loss landscape near the interpolation threshold $p/n \approx 1$, where the student has just enough parameters to fit the training data exactly.

Setup: Hidden Manifold Model

Data is generated in three steps:

- Draw a low-dimensional latent vector $\mathbf{c}^\mu \sim \mathcal{N}(0, I_d)$.

- Project and apply a nonlinearity to get observations: $\mathbf{x}^\mu = \sigma\!\left(\frac{1}{\sqrt{d}} F^\top \mathbf{c}^\mu\right)$, where $F \in \mathbb{R}^{d \times p}$ is a random matrix and $\sigma$ acts element-wise.

- Generate labels via a teacher direction $\theta^0 \in \mathbb{R}^d$: $y^\mu = f^0\!\left(\frac{1}{\sqrt{d}}\,\mathbf{c}^\mu \cdot \theta^0\right)$.

This “hides” the linear structure inside a random nonlinear projection. The student only sees $(\mathbf{x}^\mu, y^\mu)$ and must recover the direction $\theta^0$ in latent space.
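The generative process above can be sketched in a few lines of NumPy. This is a minimal illustration, not the project's code: the function name `sample_hidden_manifold`, the Gaussian choice for $F$ and $\theta^0$, the defaults $\sigma = \tanh$ and $f^0(z) = z$, and the sizes in the usage line are all assumptions. Both projections use the $1/\sqrt{d}$ scaling so that the pre-activations stay of order one as $d$ grows.

```python
import numpy as np

def sample_hidden_manifold(n, p, d, sigma=np.tanh, f0=lambda z: z, rng=None):
    """Draw n samples (X, y) from a hidden manifold model (illustrative sketch)."""
    rng = np.random.default_rng(rng)
    F = rng.standard_normal((d, p))     # random projection, d x p (assumed Gaussian)
    theta0 = rng.standard_normal(d)     # teacher direction (assumed Gaussian)
    C = rng.standard_normal((n, d))     # latent vectors c^mu stored as rows
    X = sigma(C @ F / np.sqrt(d))       # x^mu = sigma(F^T c^mu / sqrt(d)), element-wise
    y = f0(C @ theta0 / np.sqrt(d))     # labels depend on the latent vector only
    return X, y, F, theta0

# Hypothetical sizes, chosen only for illustration
X, y, F, theta0 = sample_hidden_manifold(n=200, p=300, d=50, rng=0)
print(X.shape, y.shape)
```

The student never sees `C`, `F`, or `theta0`; only `X` and `y` are passed to the learning algorithm.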

Key Results

The generalization error in the thermodynamic limit depends only on three scalar overlap parameters ($m_s, q_s, q_w$) which satisfy self-consistent saddle-point equations. For regression:

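\[\epsilon_g = \rho + Q^\star - 2M^\star\]

where $Q^\star = \kappa_1^2 q_s^\star + \kappa_\star^2 q_w^\star$ and $M^\star = \kappa_1 m_s^\star$. Here $\rho = \|\theta^0\|^2/d$ is the normalized squared norm of the teacher, and the coefficients $\kappa_1$ and $\kappa_\star$ depend only on the nonlinearity $\sigma$. For classification, it reduces to the angle between the student and teacher directions: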

\[\epsilon_g = \frac{1}{\pi}\cos^{-1}\!\left(\frac{M^\star}{\sqrt{\rho\, Q^\star}}\right)\]

The double-descent spike at $p/n \approx 1$ is visible in the fixed-point solutions when regularization $\lambda$ is small.
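To make the two formulas concrete, the sketch below evaluates them for given overlaps. It assumes the definitions used in the referenced paper, $\kappa_0 = \mathbb{E}[\sigma(z)]$, $\kappa_1 = \mathbb{E}[z\,\sigma(z)]$ and $\kappa_\star^2 = \mathbb{E}[\sigma(z)^2] - \kappa_0^2 - \kappa_1^2$ for $z \sim \mathcal{N}(0,1)$, and computes them with a simple grid sum. The function names and grid settings are illustrative, and the classification error normalizes the overlap by $\sqrt{\rho\,Q^\star}$, that is, by both the teacher and student norms.

```python
import numpy as np

def gaussian_moments(sigma, half_width=10.0, num=200001):
    """Return (kappa0, kappa1, kappa_star) for z ~ N(0, 1) using a grid sum."""
    z = np.linspace(-half_width, half_width, num)
    dz = z[1] - z[0]
    w = np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi)    # standard normal density
    s = sigma(z)
    k0 = np.sum(s * w) * dz                           # E[sigma(z)]
    k1 = np.sum(z * s * w) * dz                       # E[z sigma(z)]
    second = np.sum(s**2 * w) * dz                    # E[sigma(z)^2]
    k_star = np.sqrt(max(second - k0**2 - k1**2, 0.0))
    return k0, k1, k_star

def regression_error(rho, m_s, q_s, q_w, kappa1, kappa_star):
    """eps_g = rho + Q - 2 M, with Q and M built from the overlaps."""
    Q = kappa1**2 * q_s + kappa_star**2 * q_w
    M = kappa1 * m_s
    return rho + Q - 2.0 * M

def classification_error(rho, m_s, q_s, q_w, kappa1, kappa_star):
    """eps_g = arccos(M / sqrt(rho Q)) / pi, the teacher-student angle over pi."""
    Q = kappa1**2 * q_s + kappa_star**2 * q_w
    M = kappa1 * m_s
    return np.arccos(np.clip(M / np.sqrt(rho * Q), -1.0, 1.0)) / np.pi

k0, k1, k_star = gaussian_moments(np.sign)
print(k0, k1, k_star)
```

For $\sigma = \mathrm{sign}$ the moments are known in closed form, $\kappa_1 = \sqrt{2/\pi}$ and $\kappa_\star^2 = 1 - 2/\pi$, which gives a quick check of the numerical integration. The overlaps $(m_s, q_s, q_w)$ themselves must come from solving the saddle-point equations, which this sketch does not attempt.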

Double Descent
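The peak can also be probed empirically at small finite size. The following is a Monte Carlo sketch, not the replica computation: it trains ridge regression on hidden manifold data and averages the test error over independent draws. The sign nonlinearity, the linear teacher $f^0(z) = z$, Gaussian $F$ and $\theta^0$, the value $\lambda = 10^{-8}$, and all sizes are assumptions chosen for illustration, and the helper name `ridge_test_error` is hypothetical.

```python
import numpy as np

def ridge_test_error(n, p, d, lam, rng, n_test=2000):
    """Test MSE of ridge regression on one draw of the hidden manifold model."""
    F = rng.standard_normal((d, p))         # random projection (assumed Gaussian)
    theta0 = rng.standard_normal(d)         # teacher direction (assumed Gaussian)

    def draw(m):
        C = rng.standard_normal((m, d))     # latent vectors as rows
        return np.sign(C @ F / np.sqrt(d)), C @ theta0 / np.sqrt(d)

    X, y = draw(n)
    X_test, y_test = draw(n_test)
    A = X / np.sqrt(p)
    # Ridge solution of min_w ||y - A w||^2 + lam ||w||^2
    w = np.linalg.solve(A.T @ A + lam * np.eye(p), A.T @ y)
    return np.mean((y_test - X_test @ w / np.sqrt(p)) ** 2)

rng = np.random.default_rng(0)
n, d, lam = 100, 50, 1e-8
ratios = [0.25, 0.5, 1.0, 2.0, 4.0]
errors = [
    np.mean([ridge_test_error(n, int(r * n), d, lam, rng) for _ in range(20)])
    for r in ratios
]
for r, e in zip(ratios, errors):
    print(f"p/n = {r:4.2f}   test MSE = {e:.3f}")
```

With regularization this small, the averaged test error should be markedly larger at $p/n = 1$ than on either side of it. Increasing `lam` is expected to flatten the peak, in line with the statement that the spike appears when $\lambda$ is small.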

Interactive Notebook

The full derivation (Gibbs measure → replica trick → Gaussian equivalence → replica-symmetric saddle point → fixed-point iteration) is in the interactive notebook below. You can explore how regularization $\lambda$, the nonlinearity $\sigma$, and the noise level $\Delta$ affect the generalization error surface.
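The last stage of that pipeline, fixed-point iteration, follows a generic pattern that can be sketched independently of the model. The saddle-point equations themselves are not reproduced here; the `update` argument is a placeholder for the map that takes the current overlaps to their updated values, and the toy map $x \mapsto \cos x$ stands in for it. The function name, the damping value, and the tolerance are illustrative choices.

```python
import numpy as np

def fixed_point(update, x0, damping=0.5, tol=1e-10, max_iter=10_000):
    """Iterate x <- (1 - damping) * x + damping * update(x) until the step is below tol."""
    x = np.asarray(x0, dtype=float)
    for it in range(max_iter):
        x_new = (1.0 - damping) * x + damping * np.asarray(update(x), dtype=float)
        if np.max(np.abs(x_new - x)) < tol:
            return x_new, it + 1
        x = x_new
    raise RuntimeError("fixed-point iteration did not converge")

# Toy stand-in for the saddle-point map: solves x = cos(x)
x_star, n_iter = fixed_point(np.cos, x0=[1.0])
print(x_star, n_iter)
```

Damping mixes the old and new iterates, which often helps when the undamped map oscillates; in a setting like this one, that is most likely to matter near the interpolation threshold with small $\lambda$.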

If the interactive notebook does not load, open it directly: full notebook.

Reference

Gerace, F., Loureiro, B., Krzakala, F., Mézard, M., & Zdeborová, L. (2020). Generalisation error in learning with random features and the hidden manifold model. ICML 2020.